Yuxuan Liu

Hi!

I am a first-year PhD student at UM CSE, working on HCI and accessibility. I am fortunate to be advised by Prof. Anhong Guo.

Publications

-

HandProxy: Expanding the Affordances of Speech Interfaces in Immersive Environments with a Virtual Proxy HandChen Liang, Yuxuan Liu, Martez Mott, and Anhong GuoIMWUT/UbiComp ’25

HandProxy: Expanding the Affordances of Speech Interfaces in Immersive Environments with a Virtual Proxy HandChen Liang, Yuxuan Liu, Martez Mott, and Anhong GuoIMWUT/UbiComp ’25Hand interactions are increasingly used as the primary input modality in immersive environments, but they are not always feasible due to situational impairments, motor limitations, and environmental constraints. Speech interfaces have been explored as an alternative to hand input in research and commercial solutions, but are limited to initiating basic hand gestures and system controls. We introduce HandProxy, a system that expands the affordances of speech interfaces to support expressive hand interactions. Instead of relying on predefined speech commands directly mapped to possible interactions, HandProxy enables users to control the movement of a virtual hand as an interaction proxy, allowing them to describe the intended interactions naturally while the system translates speech into a sequence of hand controls for real-time execution. A user study with 20 participants demonstrated that HandProxy effectively enabled diverse hand interactions in virtual environments, achieving a 100% task completion rate with an average of 1.09 attempts per speech command and 91.8% command execution accuracy, while supporting flexible, natural speech input with varying levels of control and granularity.

-

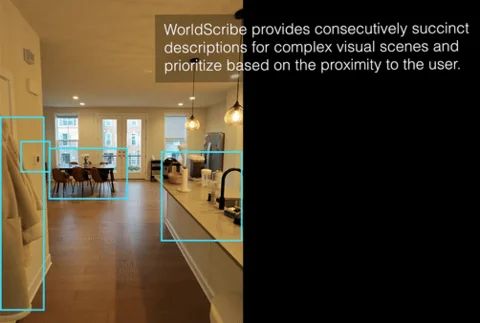

WorldScribe: Towards Context-Aware Live Visual DescriptionsRuei-Che Chang, Yuxuan Liu, and Anhong GuoUIST ’24

WorldScribe: Towards Context-Aware Live Visual DescriptionsRuei-Che Chang, Yuxuan Liu, and Anhong GuoUIST ’24Automated live visual descriptions can aid blind people in understanding their surroundings with autonomy and independence. However, providing descriptions that are rich, contextual, and just-in-time has been a long-standing challenge in accessibility. In this work, we develop WorldScribe, a system that generates automated live real-world visual descriptions that are customizable and adaptive to users’ contexts. WorldScribe’s description is customized to users’ intent and prioritized based on semantic relevance. WorldScribe is also adaptive to visual contexts, e.g., providing consecutively succinct descriptions for dynamic scenes, while presenting longer and detailed ones for stable settings. Additionally, WorldScribe is adaptive to sound contexts, e.g., increasing volume or pausing in noisy environments. WorldScribe is powered by a suite of vision, language, and sound recognition models. It presents a description generation pipeline that balances the tradeoffs between their richness and latency to support real-time usage. The design of WorldScribe is informed by prior work on providing visual descriptions and a formative study with blind participants. Our user study and following pipeline evaluation show that WorldScribe can provide real-time and fairly accurate visual descriptions to facilitate environment understanding that is adaptive and customized to users’ contexts. Finally, we discuss the implications and further steps toward making live visual descriptions more context-aware and humanized.

-

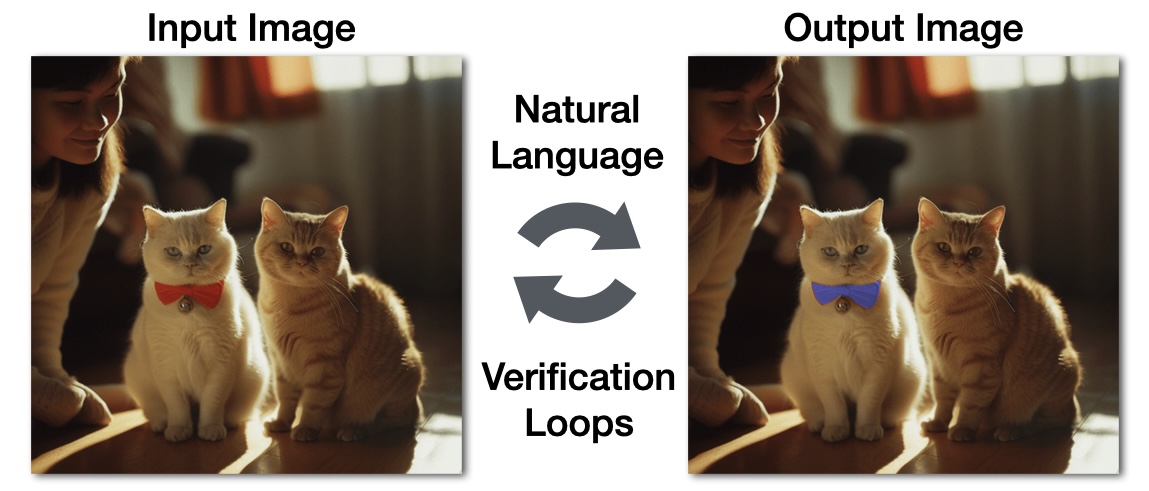

EditScribe: Non-Visual Image Editing with Natural Language Verification LoopsRuei-Che Chang, Yuxuan Liu, Lotus Zhang, and Anhong GuoASSETS ’24

EditScribe: Non-Visual Image Editing with Natural Language Verification LoopsRuei-Che Chang, Yuxuan Liu, Lotus Zhang, and Anhong GuoASSETS ’24Image editing is an iterative process that requires precise visual evaluation and manipulation for the output to match the editing intent. However, current image editing tools do not provide accessible interaction nor sufficient feedback for blind and low vision individuals to achieve this level of control. To address this, we developed EditScribe, a prototype system that makes image editing accessible using natural language verification loops powered by large multimodal models. Using EditScribe, the user first comprehends the image content through initial general and object descriptions, then specifies edit actions using open-ended natural language prompts. EditScribe performs the image edit, and provides four types of verification feedback for the user to verify the performed edit, including a summary of visual changes, AI judgement, and updated general and object descriptions. The user can ask follow-up questions to clarify and probe into the edits or verification feedback, before performing another edit. In a study with ten blind or low-vision users, we found that EditScribe supported participants to perform and verify image edit actions non-visually. We observed different prompting strategies from participants, and their perceptions on the various types of verification feedback. Finally, we discuss the implications of leveraging natural language verification loops to make visual authoring non-visually accessible.